(This post originally appeared on the Coast and Ocean Collective Blog)

In May I went to my first annual meeting of CSDMS, the Community Surface Dynamics Modeling System. It was great to see old friends and meet new ones.

CSDMS is involved in a range of projects and provides a suite of services to the earth surface processes modeling community. You might know CSDMS from its model repository (with metadata and links to source code) and from the handy tools it has developed to link models together. For more background on CSDMS, check out their webpage.

One nice aspect of CSDMS is that the keynotes and panels are recorded and put on YouTube, and many poster presenters upload PDFs of their posters. I have spent a few hours skimming through the videos and PDFs from past meetings, and they are full of interesting ideas.

The annual meeting theme this year was ‘Bridging Boundaries’, and there was a range of interesting talks, posters, clinics, breakout sessions, and panels. I want to mention just a few highlights from those three packed days.

- I really enjoyed the wide range of keynotes. Two particularly interesting ones were:

  - Zoltán Sylvester’s presentation on meanderpy. It’s always amazing to see simple rules reproduce patterns on Earth. The visuals in the presentation are stunning. And check out the well-documented Python model.

  - Boyana Norris’s talk on code performance issues (especially the end, with its analysis of commit messages and software forum posts).

- I really enjoyed the two panel discussions:

  - The first was the machine learning and artificial intelligence panel, with both academic and industry representatives.

  - On the last day, Lejo Flores moderated a panel on diversity, equity, and inclusion. This panel was particularly interesting, with many great insights. I recommend watching it.

- A real highlight for me was Dan Buscombe’s deep learning clinic. Dan walked us through a comprehensive Jupyter notebook based on his work on pixel-scale image classification. It was great to hear Dan explain his workflow and to meet him in person. I urge you to check out his work!

- There were too many amazing posters to cover in one post. I recommend scrolling through the abstracts and poster PDFs online.

- I live-tweeted the third day through the CSDMS and AGU EPSP Twitter accounts. This was really fun, and I’m grateful to the AGU EPSP social media team for the opportunity.

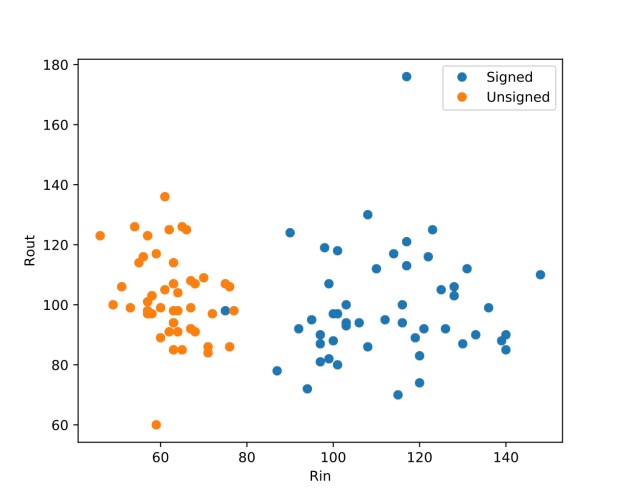

- I am very grateful to CSDMS for inviting me to give a keynote this year. It was exciting to share my ideas with such a talented group of people. My talk (video, slides) focused on ML work that I have done with the Coast and Ocean Collective (and others), specifically work on swash, runup, ‘hybrid’ models, and the ML review paper that was just published.

- Lastly, I ate a lot of (good) pizza.